About Me

I am a Senior AI/ML Engineer and Data Scientist with 5+ years of experience building production-grade AI systems that deliver real business impact. My current focus is designing and maturing AI agents and agentic workflows, moving them from experimental prototypes to reliable, autonomous systems that operate at scale.

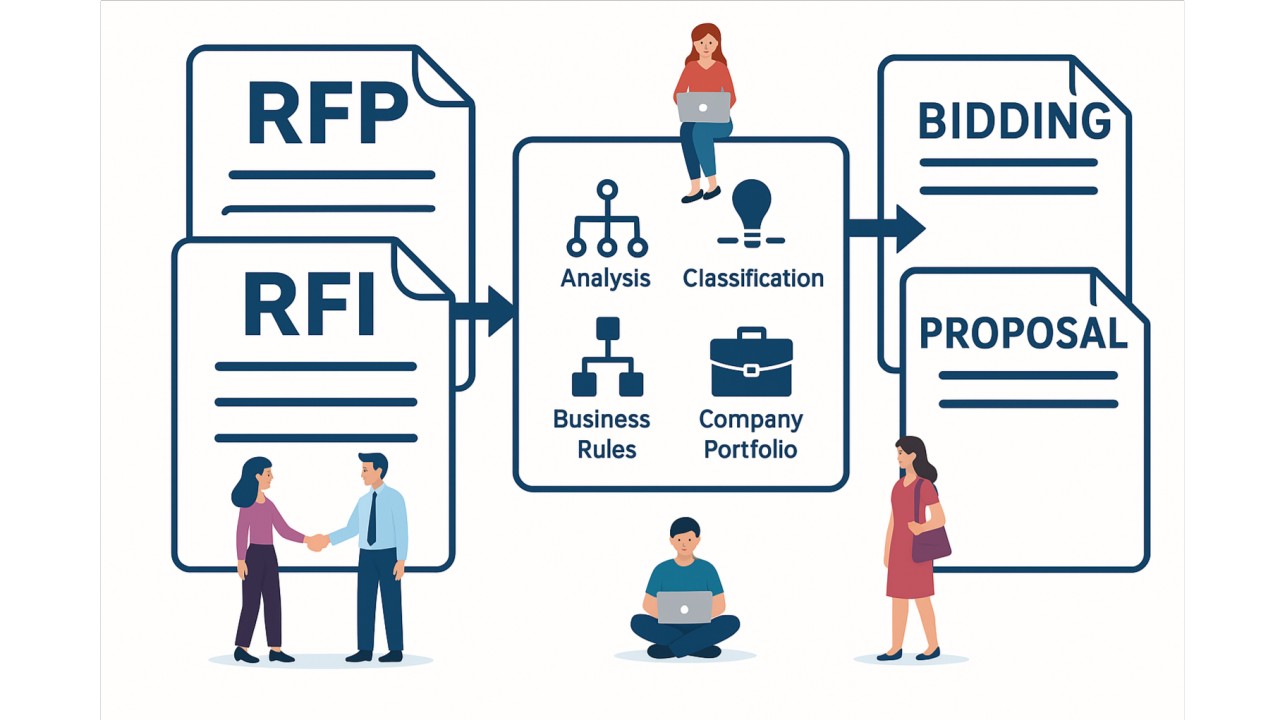

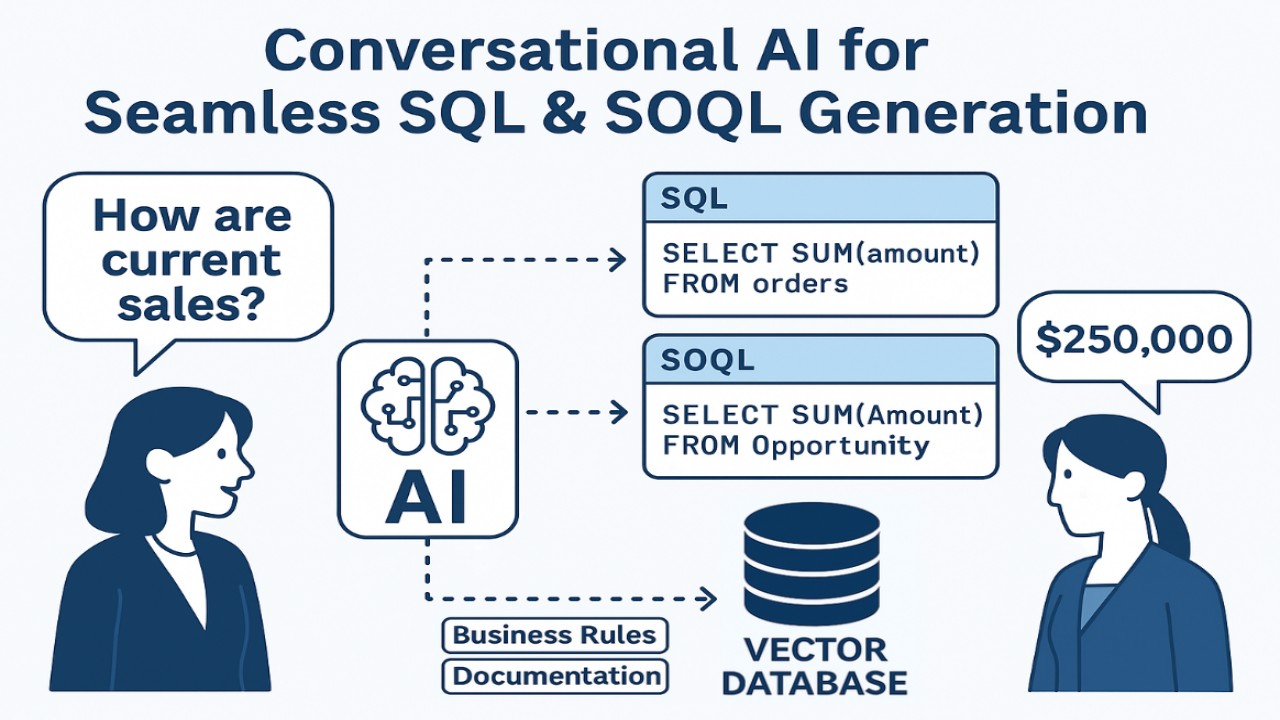

• My core expertise spans Agentic AI Systems, LLMs, Generative AI, MLOps, and Data Engineering, with deep hands-on experience across Healthcare, Legal, Finance, Logistics, Sales, and Customer Support.

• I've architected solutions for industry leaders including Motorola, Caliber Home Loans, and Logitech, where success was always measured by business outcomes, not model accuracy alone.

• I operate at the intersection of engineering and strategy, leading AI/ML teams, owning end-to-end pipelines from data to deployment, and working directly with stakeholders to turn complex AI capabilities into decisions that move the needle.

Outside of work, I follow AI research and industry trends closely and enjoy exploring new food spots, because great ideas, like great food, are worth seeking out.

If you're building something ambitious with AI, let's talk.

Skills

Experience

Education

Certifications

- Areas of Expertise

• Machine Learning & AI | Conversational AI & NLP Solutions | MLOps & Model Deployment | Data Modeling & Feature Engineering | GenAI & FinTech Solutions - Languages

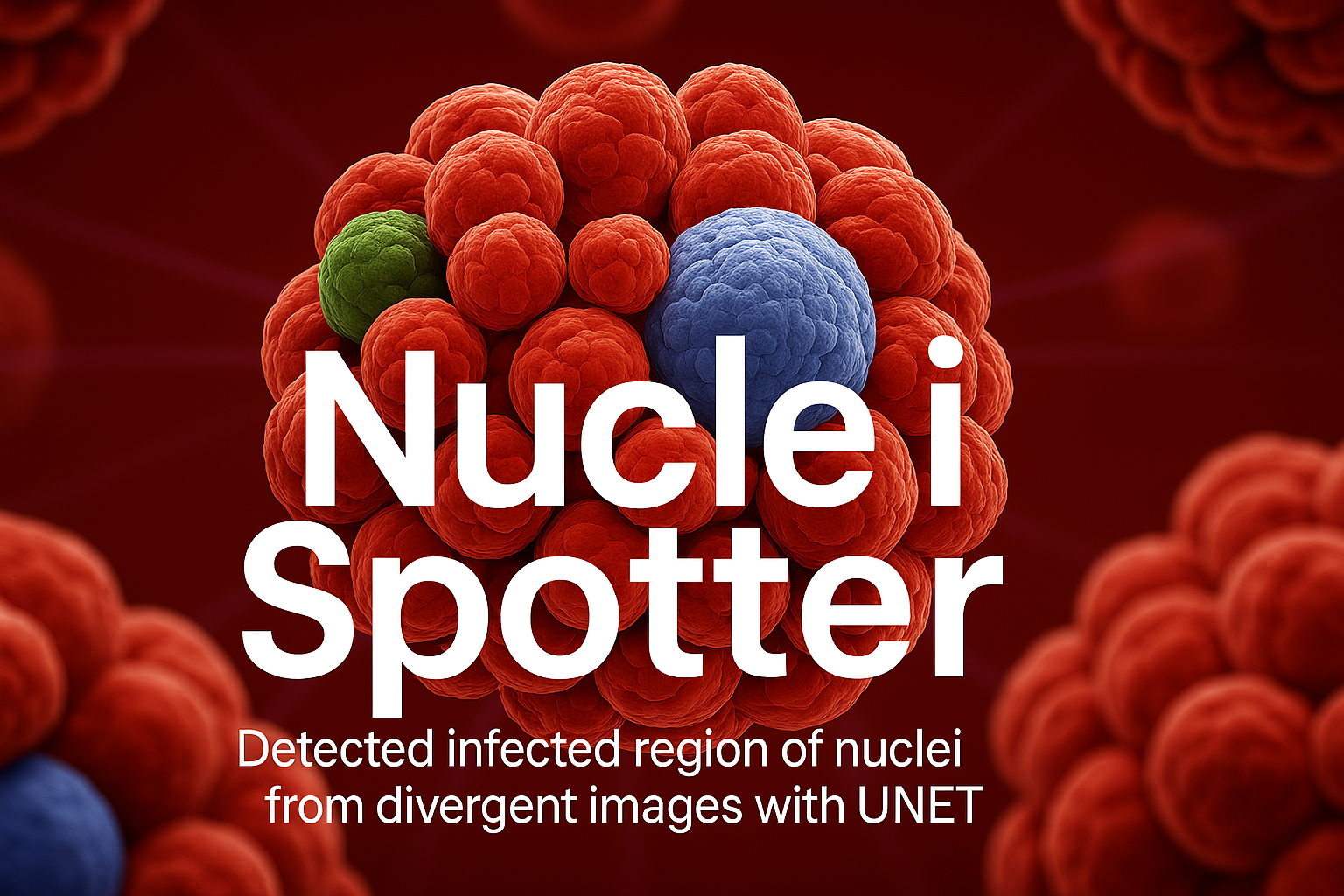

• Python, SQL, C/C++, Java, Bash - Machine Learning

• Classification, Regression, t-SNE, PCA, CNN, UNet, OpenCV, NLTK, SpaCy - Frameworks

• Sciki-learn, TensorFlow, PyTorch, Keras, SciPy, XGBoost, Hyperopt, Pandas, NumPy, Vaex, Dask, PySpark, MLflow, Streamlit - Data Science

• Statistical Analysis, Data Mining, Data Wrangling, Data Drift, Concept Drift, A/B Testing, T-Test - Generative AI

• OpenAI, Ollama, Deepseek, Cohere, Mistral, Gemini, Microsoft Phi, Vertax, LangChain, LlamaIndex, HuggingFace, Transformers, RAG (Naive, Rerank, Agentic), LangGraph, NL2SQL, Vector Database (Chroma, Qdrant, Faiss, Pinecone), PEFT, LoRA, DSPy, Explainable AI, Prompt Engineering, Bias Mitigation, Ragas - Database Management

• MySQL, SQL Server, PostgreSQL, MongoDB, Snowflake, Kafka, Salesforce SOQL, Oracle, Elasticsearch, MariaDB, NoSQL - Data Visulization

• Power BI, Matplotlib, Seaborn, Plotly, Dash - Cloud & DevOps

• AWS, Azure, GCP, Sagemaker, Bedrock, Databricks, Hadoop, AWS Lambda, Serverless, Docker, Kubernetes, Airflow, Git, Jenkins, SSH, Github Actions, Infrastructure-as-Code

- Mar 2022 - Present (Giggso Inc.)

Data Scientist

- Dec 2020 - Mar 2022 (Giggso Inc.)

Junior Data Scientist

- Aug 2018 - Feb 2021 (FAST NUCES University)

Teaching Assistant

- Oct 2019 - Sep 2020 (FAST NUCES University)

Full Stack Intern

- National University of Computer & Emerging Sciences (NUCES)

Bachelor's in Computer Science

- Government Graduate College

Intermediate in Computer Science

Finasys
